|

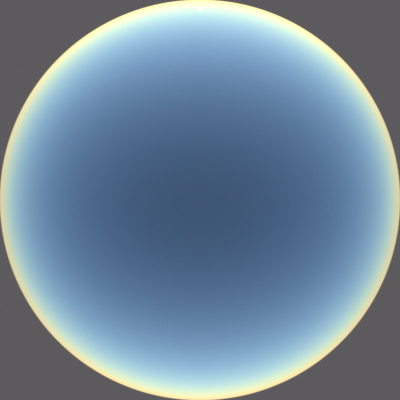

| With aerosols. Solar elevation angle 4°. |

|

| No aerosols. Solar elevation angle 4°. Same exposure as the image above. |

I made a first pass at adding aerosols to the atmosphere. Aerosols have a significant impact on the appearance of the sky, but simulating them isn't particularly straightforward: the types and levels of aerosols vary widely by region (e.g., city vs. forest vs. ocean) and with other factors, and computing their scattering properties is quite complicated.

For the scattering phase function, I'm currently using the Henyey-Greenstein phase function, which I had already implemented for Photorealizer, including analytic sampling of the scattering direction. I used a constant asymmetry parameter of 0.7, meaning that the mean cosine of the scattering angle is 0.7, which indicates strong forward scattering. Based on my research, this seems to be a reasonable average value. I plan to implement Cornette and Shanks's modified version of the Henyey-Greenstein phase function, which better approximates actual Mie scattering phase functions and converges to the Rayleigh phase function as the asymmetry parameter approaches zero.
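To make the phase function discussion concrete, here is a minimal Python sketch (not the Photorealizer code) of the Henyey-Greenstein phase function, its analytic inverse-CDF sampling of the scattering angle, and the Cornette-Shanks variant. The function names are hypothetical. The sketch assumes `cos_theta` is measured between the incoming propagation direction and the scattered direction, so positive values mean forward scattering, and that both phase functions are normalized to integrate to 1 over the sphere.

```python
import math
import random


def hg_phase(cos_theta: float, g: float) -> float:
    """Henyey-Greenstein phase function, normalized over the sphere (1/sr)."""
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (1.0 - g * g) / (4.0 * math.pi * denom ** 1.5)


def sample_hg_cos_theta(g: float, xi: float) -> float:
    """Analytically invert the HG cumulative distribution for uniform xi in [0, 1)."""
    if abs(g) < 1e-6:
        return 2.0 * xi - 1.0  # isotropic limit
    s = (1.0 - g * g) / (1.0 - g + 2.0 * g * xi)
    cos_theta = (1.0 + g * g - s * s) / (2.0 * g)
    return max(-1.0, min(1.0, cos_theta))  # guard against rounding just outside [-1, 1]


def cornette_shanks_phase(cos_theta: float, g: float) -> float:
    """Cornette-Shanks modification of HG; equals the Rayleigh phase function at g = 0."""
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (3.0 / (8.0 * math.pi)) * (1.0 - g * g) / (2.0 + g * g) \
        * (1.0 + cos_theta * cos_theta) / denom ** 1.5
```

A full scattering direction also needs an azimuth, uniform in [0, 2π), applied in a frame built around the incoming direction. With `g = 0.7`, the average of many `sample_hg_cos_theta(0.7, rng.random())` draws should come out close to 0.7, matching the "mean cosine" interpretation of the asymmetry parameter.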

I used a constant single scattering albedo of 0.9, which, like the asymmetry parameter, seems to be a reasonable average value based on my research. At the sampled extinction distance, I scatter the photon/ray with 90% probability and absorb it with 10% probability.
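The scatter-or-absorb step can be sketched as follows. This is an illustration under an explicit simplifying assumption: the free-path distance is sampled for a homogeneous medium with a constant extinction coefficient `sigma_t`, whereas a real atmosphere thins out with altitude and would need something like ray marching or delta tracking to sample the distance. The function names and the constant are hypothetical.

```python
import math
import random

SINGLE_SCATTERING_ALBEDO = 0.9  # constant value from the text


def sample_free_path(sigma_t: float, rng: random.Random) -> float:
    """Sample the distance to the next interaction, ASSUMING a homogeneous
    medium with constant extinction coefficient sigma_t (1/length)."""
    return -math.log(1.0 - rng.random()) / sigma_t  # 1 - u lies in (0, 1]


def resolve_interaction(rng: random.Random,
                        albedo: float = SINGLE_SCATTERING_ALBEDO) -> str:
    """At the sampled point, scatter with probability albedo, otherwise absorb."""
    return "scatter" if rng.random() < albedo else "absorb"
```

An alternative with lower variance is to always scatter and multiply the path throughput by the albedo instead of terminating 10% of paths; both are unbiased.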

I changed the Earth's surface to have an albedo of 31%, the figure commonly quoted as Earth's average albedo. (Strictly speaking, that value is Earth's planetary albedo, which includes clouds and the atmosphere; the average albedo of the surface alone is lower.) At some point, I would like to make a procedural ground, or use a map of the actual Earth. Procedural mountains would be particularly nice for showing off atmospheric effects.
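The text doesn't say how the ground reflects light, so the following is only a sketch under the assumption of a Lambertian (perfectly diffuse) ground with the 31% albedo: a path hitting the ground survives with probability 0.31 and, if it survives, leaves in a cosine-weighted direction. The function name is hypothetical, and directions are returned in a local frame whose +z axis is the surface normal.

```python
import math
import random

GROUND_ALBEDO = 0.31  # value from the text


def bounce_off_ground(rng: random.Random, albedo: float = GROUND_ALBEDO):
    """Hypothetical Lambertian ground: return None if absorbed, otherwise a
    cosine-weighted unit direction (x, y, z) with +z along the surface normal."""
    if rng.random() >= albedo:
        return None  # absorbed by the ground
    u1, u2 = rng.random(), rng.random()
    r = math.sqrt(u1)  # uniform point on the unit disk...
    phi = 2.0 * math.pi * u2
    z = math.sqrt(max(0.0, 1.0 - u1))  # ...projected up onto the hemisphere
    return (r * math.cos(phi), r * math.sin(phi), z)
```

Projecting a uniform disk sample onto the hemisphere gives a density proportional to the cosine of the angle from the normal, which exactly cancels the cosine factor in a Lambertian BRDF estimate.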

My aerosol system could be improved in many ways. In particular, I could use a realistic particle size distribution and realistic proportions of particle types (each type having its own complex index of refraction, with real and imaginary parts), and then compute scattering and absorption properties using the Mie solution to Maxwell's equations. That might be overkill for now, although I'm sure I would learn a lot in the process.
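For a single homogeneous sphere, the Mie computation is fairly compact. The sketch below follows the structure of Bohren and Huffman's BHMIE routine and returns the extinction and scattering efficiencies and the asymmetry parameter for a given complex refractive index `m` (imaginary part ≥ 0 means absorbing) and size parameter `x = 2πr/λ`. The function name is hypothetical, and averaging over a size distribution and particle types is not included. Cross sections follow as πr²·Q. The ratio Q_sca/Q_ext is the single scattering albedo, and `g` plays the same role as the Henyey-Greenstein asymmetry parameter.

```python
import math


def mie_efficiencies(m: complex, x: float):
    """Return (Q_ext, Q_sca, g) for a homogeneous sphere (BHMIE-style sketch)."""
    y = m * x
    nstop = int(x + 4.0 * x ** (1.0 / 3.0) + 2.0)  # number of series terms
    nmx = int(max(nstop, abs(y))) + 15

    # Logarithmic derivative D_n(mx), computed by stable downward recurrence.
    d = [0j] * (nmx + 1)
    for n in range(nmx, 0, -1):
        d[n - 1] = n / y - 1.0 / (d[n] + n / y)

    # Riccati-Bessel functions at orders -1 and 0, then recurred upward.
    psi0, psi1 = math.cos(x), math.sin(x)
    chi0, chi1 = -math.sin(x), math.cos(x)
    xi1 = complex(psi1, -chi1)

    qext = qsca = gsum = 0.0
    an_prev = bn_prev = 0j
    for n in range(1, nstop + 1):
        psi = (2 * n - 1) * psi1 / x - psi0
        chi = (2 * n - 1) * chi1 / x - chi0
        xi = complex(psi, -chi)
        ta = d[n] / m + n / x
        tb = m * d[n] + n / x
        an = (ta * psi - psi1) / (ta * xi - xi1)  # Mie coefficient a_n
        bn = (tb * psi - psi1) / (tb * xi - xi1)  # Mie coefficient b_n
        qext += (2 * n + 1) * (an + bn).real
        qsca += (2 * n + 1) * (abs(an) ** 2 + abs(bn) ** 2)
        gsum += (2 * n + 1) / (n * (n + 1)) * (an * bn.conjugate()).real
        if n > 1:
            gsum += (n - 1) * (n + 1) / n * (
                (an_prev * an.conjugate()).real + (bn_prev * bn.conjugate()).real)
        psi0, psi1 = psi1, psi
        chi0, chi1 = chi1, chi
        xi1 = complex(psi1, -chi1)
        an_prev, bn_prev = an, bn

    g = 2.0 * gsum / qsca
    return 2.0 * qext / x ** 2, 2.0 * qsca / x ** 2, g
```

Two sanity checks: for very small `x`, Q_sca approaches the Rayleigh result (8/3)x⁴|(m²−1)/(m²+2)|², and for very large nonabsorbing spheres, Q_ext approaches 2 (the extinction paradox).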